
Fewer People, Better Results: What Research Reveals About Team Size and AI

When your business is growing and the to-do list is getting longer, the instinct is to hire. More people, more capacity, more output. It's the scaling playbook that every business owner knows by heart.

But what if headcount isn't the right lever anymore?

Across the technology industry, a different model is emerging. Companies worth billions are being built by teams that would barely fill a conference room. They're not cutting corners or burning people out – they're making a deliberate strategic choice: fewer people, more experience, better tools.

And now, two landmark research studies help explain why this model works – and why AI makes it more powerful than ever.

The Science: Small Teams Have a Structural Advantage

Source: lingfeiwu.github.io/smallTeams/

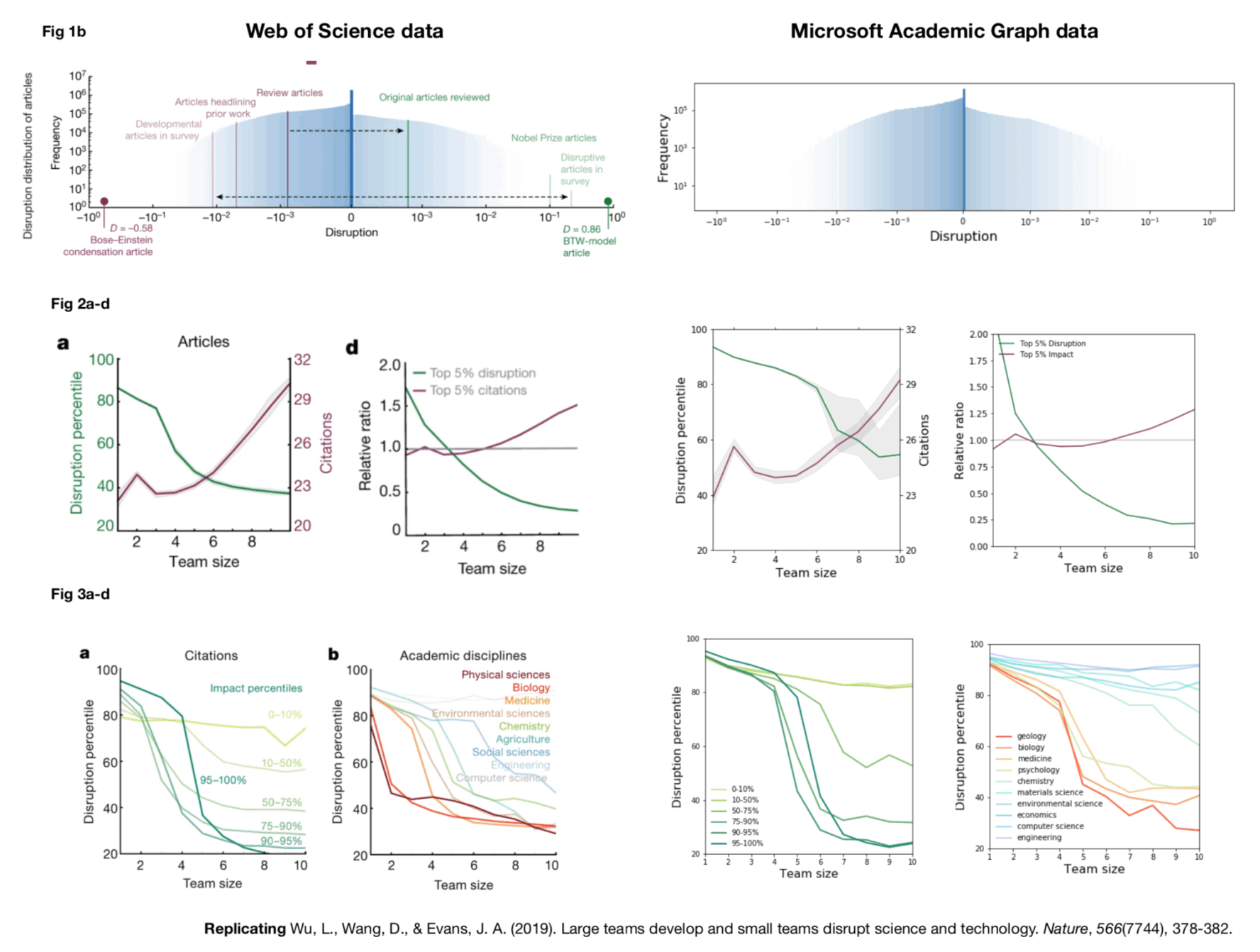

In 2019 – before ChatGPT, before Copilot, before any of the AI coding tools that dominate today's headlines – researchers at the University of Chicago and Northwestern University published what may be the most comprehensive study of innovation ever conducted. Appearing in Nature, the study analyzed over 65 million papers, patents, and software projects spanning six decades (1954-2014). The question was simple: does team size affect the type of innovation a team produces?

The answer was unequivocal. Small teams are far more likely to produce disruptive, novel work – the kind that opens new directions and redefines how people think about a problem. Large teams, by contrast, tend to develop and consolidate existing ideas. They refine what already works. They're good at sequels, not originals.

The data bore this out at every scale. Small teams were more likely to draw on older, less popular ideas and recombine them in unexpected ways. Large teams gravitated toward recent, high-profile work – building on what's trending rather than what's untested. Even Nobel Prize-winning papers, which rank in the top 2% for disruptive impact, came disproportionately from small teams.

James Evans, one of the study's co-authors, put it bluntly: big teams are almost always more conservative, and the work they produce tends to be reactive and low-risk.

This finding matters because it predates the AI era entirely. The structural advantages of small teams – agility, deep context, willingness to take creative risks – are not a product of any particular technology. They're a product of how small groups think and work together. Companies like Pieoneers were built on this conviction long before AI tools existed: that a small team of senior developers, working closely with a client, will build something more original and more focused than a department ever could.

What's changed is that AI has dramatically widened the gap. The same qualities that make small teams better innovators – deep expertise, strong judgment, the ability to hold an entire system in your head – turn out to be exactly what's needed to get the most out of AI tools. The advantage that was already there is now amplified.

The Nuance: AI Amplifies Judgment, Not Just Effort

If the Nature study explains why small teams innovate, a second study explains how AI fits into the picture – and the answer is more nuanced than most headlines suggest.

Source: metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

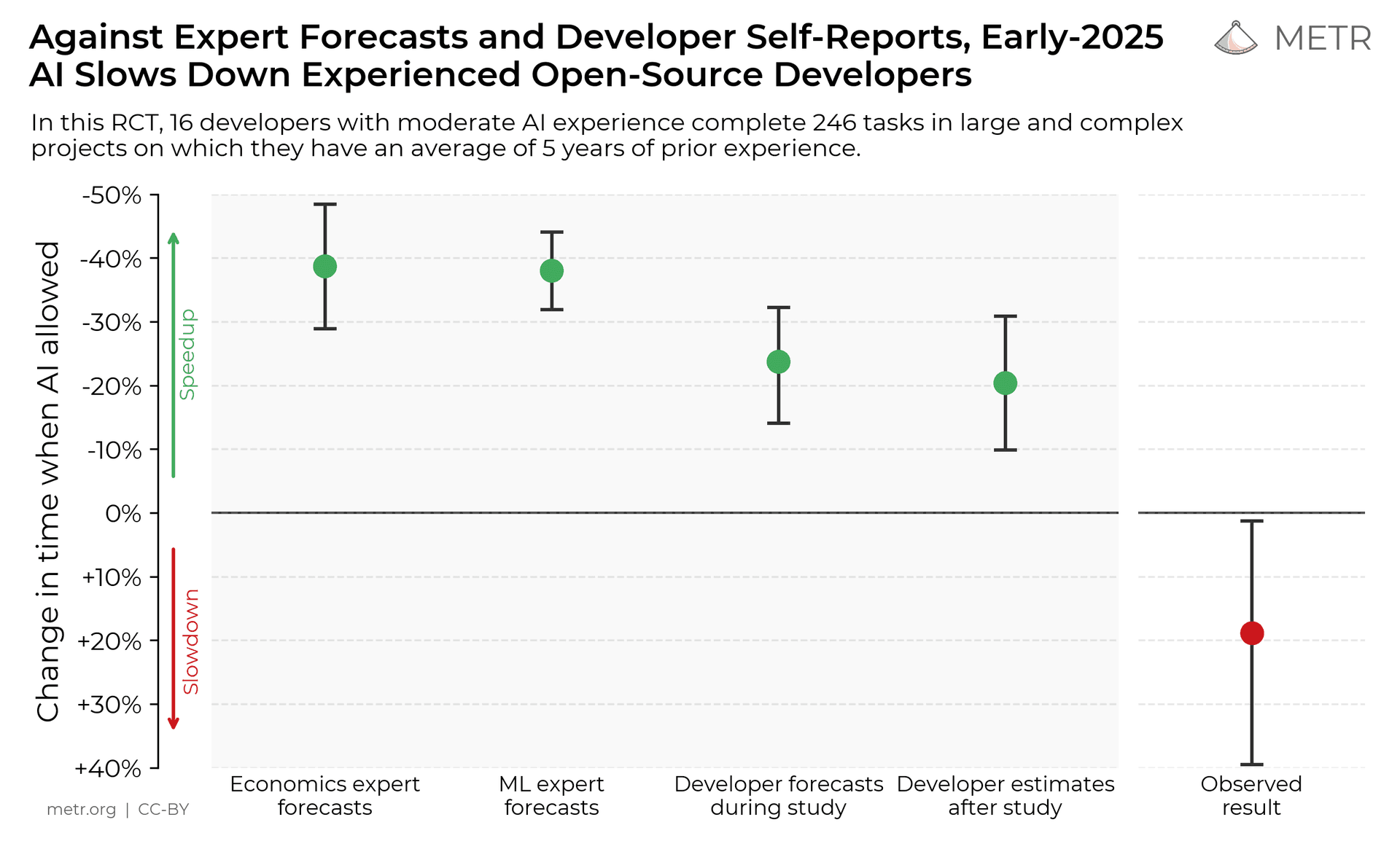

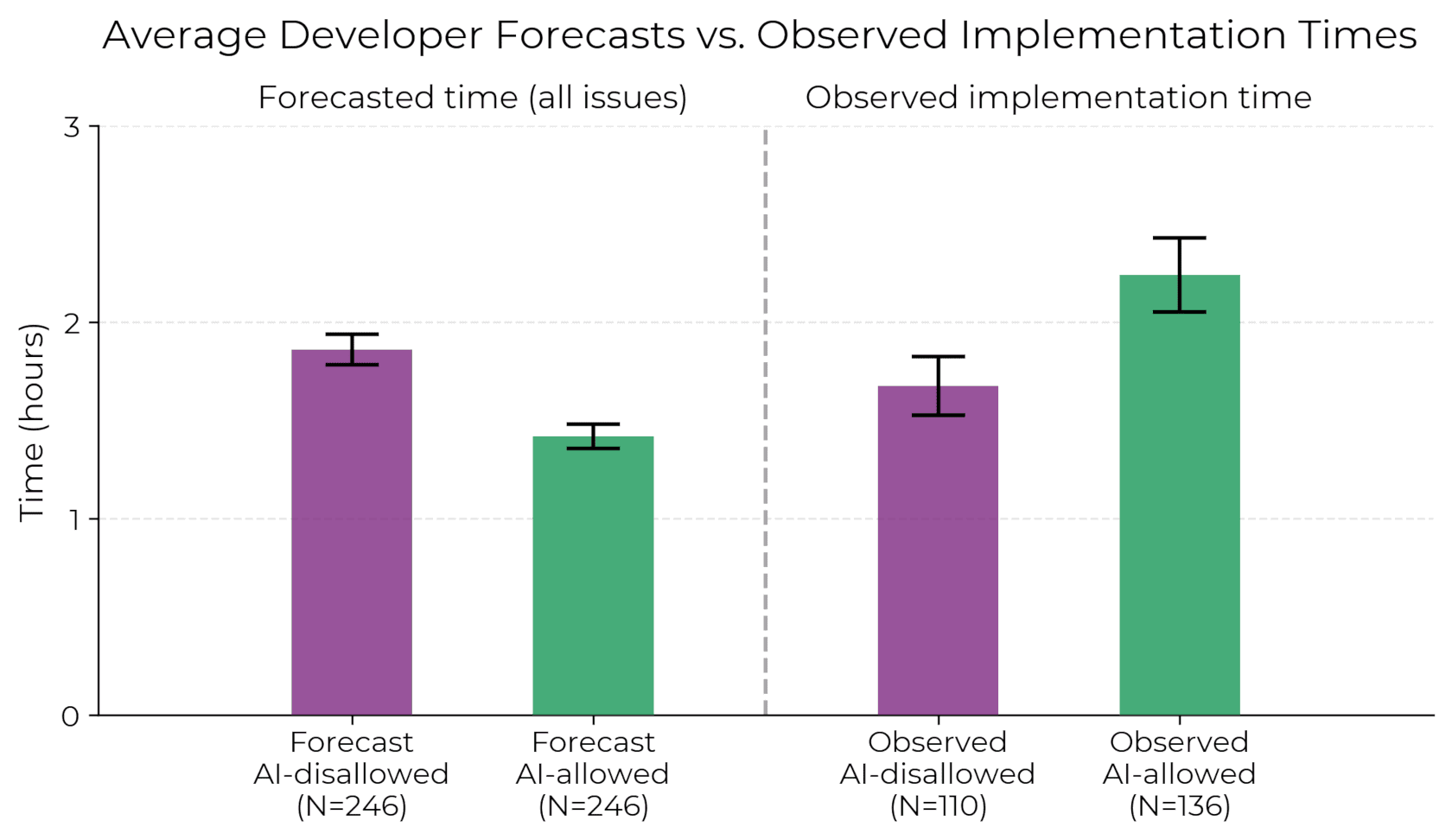

In July 2025, METR (Model Evaluation & Threat Research) published the results of a randomized controlled trial measuring how AI coding tools affect the productivity of experienced software developers. Sixteen developers with an average of five years of deep experience on their respective projects completed 246 real tasks. Each task was randomly assigned to either allow or disallow the use of AI tools. When AI was allowed, developers primarily used Cursor Pro with Claude 3.5 and 3.7 Sonnet – the leading models at the time.

The result surprised almost everyone: when AI was allowed, developers took 19% longer to complete their tasks than when it was not. Even more striking, the developers themselves didn't notice. Before starting, they predicted AI would make them 24% faster. After finishing – and actually being slower – they still believed it had sped them up by about 20%.
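The gap between expectation and measurement is easier to see in minutes. The sketch below is a minimal illustration, assuming the percentages above are read as changes in task completion time. The 60-minute baseline task is hypothetical and is not a figure from the study.

```python
# Percentages quoted in this post, read as changes in task completion time.
FORECAST_CHANGE = -0.24   # before the study: developers expected AI to cut time by 24%
PERCEIVED_CHANGE = -0.20  # after the study: they believed AI had cut time by about 20%
MEASURED_CHANGE = 0.19    # measured: tasks took 19% longer when AI was allowed

# Hypothetical baseline: a task that takes 60 minutes without AI (assumption).
BASELINE_MINUTES = 60.0


def minutes_with_ai(baseline_minutes: float, change: float) -> float:
    """Apply a fractional change in completion time to a baseline duration."""
    return baseline_minutes * (1 + change)


forecast_minutes = minutes_with_ai(BASELINE_MINUTES, FORECAST_CHANGE)
perceived_minutes = minutes_with_ai(BASELINE_MINUTES, PERCEIVED_CHANGE)
measured_minutes = minutes_with_ai(BASELINE_MINUTES, MEASURED_CHANGE)

print(f"Forecast with AI:  {forecast_minutes:.1f} min")
print(f"Perceived with AI: {perceived_minutes:.1f} min")
print(f"Measured with AI:  {measured_minutes:.1f} min")
print(f"Perception gap:    {measured_minutes - perceived_minutes:.1f} min")
```

On this hypothetical one-hour task, developers would have felt they finished in about 48 minutes while the clock showed a little over 71.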

Why the slowdown? The researchers identified several factors. Developers accepted fewer than 44% of AI-generated suggestions. Much of their time went to reviewing, testing, and ultimately rejecting code that didn't meet the standards of a complex, mature codebase. AI tools performed worse in the kind of large, intricate projects where experienced developers do their most important work.

But here's what makes this study truly interesting for business owners: the researchers were careful to note that their findings applied to a specific context – senior developers working in codebases they knew deeply. AI likely helps more in unfamiliar codebases, for less experienced developers, or for tasks like scaffolding new projects.

And then the landscape shifted dramatically.

About seven months later, in February 2026, METR announced they were fundamentally changing their study design. The reason? They could no longer recruit enough developers willing to work without AI, even at $150 per hour. The tools had improved so rapidly that experienced developers now considered them indispensable. METR's own data showed that developers with the highest expectations for AI's value were the ones dropping out of the study – creating a selection bias that made continued measurement unreliable.

This is the story in miniature: AI coding tools went from measurably slowing experts down to being something those same experts refused to give up – in under a year.

The takeaway isn't that AI replaces developer expertise. It's the opposite. AI is a force multiplier that rewards experienced judgment. The developers who get the most out of these tools are the ones who know when to use them and when to override them, who can evaluate AI-generated code against the deep context of a complex project, and who understand what "good" looks like before asking a machine to produce it.

This is the senior developer advantage. And it's exactly what makes small, experienced teams so powerful in the age of AI.

The Proof: Companies That Build This Way

The science points in one direction. But does the model actually work in practice? Three companies – none of them trillion-dollar tech giants – suggest it does.

37signals: 25 Years of Calm, Profitable Growth

37signals, the company behind Basecamp, HEY, and ONCE, has been the standard-bearer for small-team philosophy since 1999. With roughly 75 employees and zero venture capital, they've built and maintained three profitable products while their co-founder David Heinemeier Hansson created Ruby on Rails – a framework that powers thousands of applications worldwide.

Their philosophy is articulated across three books (Rework, Remote, and It Doesn't Have to Be Crazy at Work) and one core belief: small teams do the best work, not the most work. As their company page puts it: small is not less than – it's greater than, faster than, friendlier than.

They're not ignoring AI, either. Jason Fried has noted that AI agents "came alive" for their team in late 2024, and they used AI to build a HEY Calendar feature in 24 hours. But the tools serve the team's existing philosophy – they don't replace it.

Linear: A $1.25 Billion Company with 87 People

Linear, the issue-tracking tool used by OpenAI, Cursor, Ramp, and thousands of other fast-growing companies, hit a $1.25 billion valuation in June 2025. At the time, they had roughly 87 employees. That's about $14.4 million in value created per person – compared to the $1-2 million typical of most startups.
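The per-person figure is a simple division, and it is sensitive to the headcount used. The sketch below is a minimal illustration using the valuation and approximate headcount quoted above. The function name and the round-number comparison at 100 employees are illustrative assumptions, not figures from Linear.

```python
def value_per_employee(valuation_usd: float, headcount: int) -> float:
    """Return valuation divided by headcount, in US dollars per person."""
    if headcount <= 0:
        raise ValueError("headcount must be a positive integer")
    return valuation_usd / headcount


# Valuation quoted in this post (June 2025).
LINEAR_VALUATION_USD = 1.25e9

# 87 is the approximate headcount quoted in this post;
# 100 is a hypothetical round number included only for comparison.
for headcount in (87, 100):
    per_person = value_per_employee(LINEAR_VALUATION_USD, headcount)
    print(f"{headcount} employees: ${per_person / 1e6:.1f}M per person")
```

At roughly 87 employees this works out to about $14.4 million per person; the rounder $12.5 million figure corresponds to a headcount of 100.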

CEO Karri Saarinen has written openly about this approach. In a company blog post titled "The Profitable Startup," he argued that ten people before product-market fit should be a ceiling, not a target, and that deliberately slow headcount growth forces better hiring decisions and protects company culture. Linear is growing faster in 2025 than in 2024, staying profitable, and maintaining a culture of craftsmanship – all without the typical headcount explosion.

Zapier: $5 Billion on $2.68 Million in Funding

Zapier, the no-code automation platform connecting over 7,000 apps, may be the most capital-efficient tech company of its generation. They've raised a total of $2.68 million in venture funding. Their valuation: $5 billion. Revenue reached $310 million in 2024 and is projected to hit $400 million in 2025.

The company has been fully remote since its founding in 2011 and profitable since 2014. Eight of the first ten employees are still with the company. With around 800 people serving over 3 million users, Zapier embodies the principle that a disciplined, experienced team with the right tools can build a category-defining platform without the bloat.

What connects these three companies isn't just their size – it's their shared conviction that constraints breed clarity. When you can't throw headcount at a problem, you're forced to think more carefully about what to build, how to build it, and who should do the work.

The Tools: Stay Lean, Build Like a Giant

These companies made their strategic choices before the current generation of AI tools existed. Today, the toolkit available to small teams is extraordinary – and it's evolving at a pace that's difficult to overstate.

As of early 2026, the AI coding landscape has consolidated around a few major tools. Claude Code, Anthropic's terminal-native coding agent, reached $1 billion in run-rate revenue within six months of its public launch in May 2025 – faster than ChatGPT reached the same milestone. Cursor, an AI-powered IDE, hit $1 billion ARR in the same month. OpenAI's Codex launched a dedicated macOS application in February 2026. GitHub Copilot, with over 20 million users, has been adopted by 90% of Fortune 100 companies.

The Stack Overflow 2025 Developer Survey, which drew over 49,000 responses from 177 countries, found that 84% of respondents now use or plan to use AI tools in their workflow, up from 76% the year before. Over half of professional developers use AI tools daily. AI-enabled tools like Cursor and Claude Code are among the fastest-growing development environments.

But the survey also revealed something that connects directly back to the METR study: developer trust in AI accuracy is actually declining. Forty-six percent of developers actively distrust AI output, up from 31% in 2024. Two-thirds say AI solutions are "almost right, but not quite." Nearly half report that debugging AI-generated code takes longer than writing it themselves.

This is not a contradiction – it's the whole point. These tools are enormously powerful, but they produce their best results in the hands of people who can evaluate, refine, and direct them with experienced judgment. The tools don't replace senior talent. They make senior talent dramatically more productive.

A small team of two or three experienced developers, equipped with the right AI tools and a clear understanding of the problem, can now produce work that would have required a team of twelve just a few years ago.

What This Means for Your Business

The science is clear: small teams produce more innovative, more disruptive work – and that was true long before AI entered the picture. The METR research points the same way: AI rewards experience and judgment, not just raw output. And the proof is visible across the industry: forward-thinking companies are building billion-dollar products with lean, experienced teams.

This isn't a trend or a theory. It's an operating model – one built on the principle that the right people, with the right tools, working with the right focus, will consistently outperform larger teams burdened by coordination overhead, communication complexity, and the slow drift toward safe, incremental work.

At Pieoneers, this is how we've always worked. Small teams of senior developers who bring deep experience to every project – and who now use AI as a genuine force multiplier. It's not about doing less. It's about doing more, with the precision and care that only comes from experience.

If you're thinking about your next build – whether it's a new platform, a product, or a system that needs to scale – we'd love to talk about what a small, senior team with the right strategy can do.

Update, March 4, 2026

In a recent interview with Dwarkesh Patel, Anthropic CEO Dario Amodei put specific numbers on the trajectory described in this post. Six months ago, he says, AI coding tools gave roughly a 5% total factor speedup. It didn't register. Today he estimates 15-20%, and it's just starting to become a factor. He also drew an important distinction: while AI now writes 90% of lines of code at Anthropic, the actual productivity gain is far lower. As he put it, compilers also write all the lines of software. Lines written is not the same as work done.

That trajectory reinforces the central point of this post: the value isn't in how much code AI generates, it's in the judgment of the people directing it. And that judgment compounds fastest in small, experienced teams.

Sources

Wu, L., Wang, D. & Evans, J.A. "Large teams develop and small teams disrupt science and technology." Nature 566, 378–382 (2019). doi.org/10.1038/s41586-019-0941-9

METR. "Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity." July 2025. arxiv.org/abs/2507.09089

METR. "We Are Changing Our Developer Productivity Experiment Design." February 24, 2026. metr.org

Stack Overflow. "2025 Developer Survey." survey.stackoverflow.co/2025

Anthropic. "Anthropic Acquires Bun as Claude Code Reaches $1B Milestone." December 2025. anthropic.com

Saarinen, K. "The Profitable Startup." Linear. linear.app

37signals. 37signals.com

Zapier company data via Sacra, SQ Magazine, and Wikipedia.

Dario Amodei on Dwarkesh Patel podcast. dwarkesh.com/p/dario-amodei-2

Olena Tkhorovska

CEO & Co-Founder, Pieoneers